Is there no stopping the AI spending spree?

Expect datacentre spending to increase tenfold. That was one of the many claims Nvidia CEO Jensen Huang made during the company’s latest quarterly earnings call. He forecast that capital expenditure (CapEx) on datacentres would increase from the $300-400bn mark today to $3-4tn by 2030.
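To put the tenfold forecast in perspective, the short Python sketch below works out the compound annual growth rate it implies. The midpoints of the two quoted ranges and the four- and five-year horizons are illustrative assumptions, since the article gives only "today" and "by 2030" as endpoints; the function name is hypothetical.

```python
def implied_annual_growth(start: float, end: float, years: float) -> float:
    """Return the compound annual growth rate that takes `start` to `end` in `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1.0 / years) - 1.0


# Assumption: use the midpoints of the quoted ranges ($300-400bn and $3-4tn).
start_bn = 350.0
end_bn = 3500.0

# Assumption: the forecast spans four or five years, depending on the base year.
for years in (4, 5):
    rate = implied_annual_growth(start_bn, end_bn, years)
    print(f"{years} years: about {rate:.0%} growth per year")
```

Under these assumptions, a tenfold rise works out to roughly 58% annual growth over five years, or about 78% over four.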

Huang’s remarks at the end of February came a few days after Microsoft’s AI Tour London event, when CEO Satya Nadella effectively called for enterprise software developers to use the capabilities now built into Microsoft 365 to create agentic AI workflows for streamlining business processes.

Nadella discussed the need to have an efficient token factory, where words or tokens can be streamed into AI engines that interpret natural language for querying large language models (LLMs). The Microsoft vision of enterprise AI is built on the M365 foundation, which acts as a knowledge store on which a new class of knowledge-based software can be built.

During his keynote presentation, Nadella spoke about the intelligence that exists in the various IT systems used across the business. He said that businesses should be able to harness the intelligence that already exists enterprise-wide, starting with what he described as the “data underneath Microsoft 365”, which, according to Nadella, represents the people in the business, their relationship to coworkers, and work artefacts such as projects, calendars and communications data. “This is huge information,” he said, which can be used to bootstrap agentic AI projects.

“Our goal is to have all of the innovation and the systems available in the token factory,” said Nadella. “That way you can build software which has the ability to use all of the capability [we provide] to train models and deliver models for inference.”

In effect, he sees the Windows software developer ecosystem evolving to where it is now a Microsoft 365 ecosystem, where enterprise data is stored in AI-enabled office productivity tools such as Word, Excel, PowerPoint, Teams and Outlook, and these can be used as the foundation for a new generation of applications that can draw on these AI data sources.

It is this idea, that all software will need to be knowledge-aware, which Huang spoke about during the company’s earnings call. “Token generation is at the centre of almost everything that relates to software in the future and relates to computing,” he said. “If you look at the way we use computing in the past, however, the amount of computation demand for software in the past is a tiny fraction of what is necessary in the future.”

According to Huang, the amount of computation necessary to run AI is 1,000 times higher than the computing power needed to run non-AI software. “The computing demand is just a lot higher,” he said. “And so, if we continue to believe there’s value in it, then the world will invest to produce that token.”

When asked whether Nvidia is confident that its customers will continue to have the ability to spend more on AI infrastructure, which could impact Nvidia’s ability to grow, Huang spoke about the opportunity in enterprises to make use of agentic AI and its widespread usefulness across organisations.

“We have now seen the inflection of agentic AI, and the usefulness of agents across the world and enterprises everywhere,” he said. “You’re seeing incredible compute demand because of it. In this new world of AI, compute is revenues. Without compute, there’s no way to generate tokens. Without tokens, there’s no way to grow revenues.”

At least, that is how he positioned AI for the investment bank analysts on the earnings call. The company posted fourth quarter revenue of $68bn, up 73% year-over-year. Datacentre revenue increased by 75% to $62bn, which Nvidia said was being driven by demand for its Blackwell architecture and AI inference deployments. It also reported networking revenue of $1bn, up 3.5x year-over-year, fuelled by adoption of NVLink, Spectrum X and other Nvidia ethernet technologies.

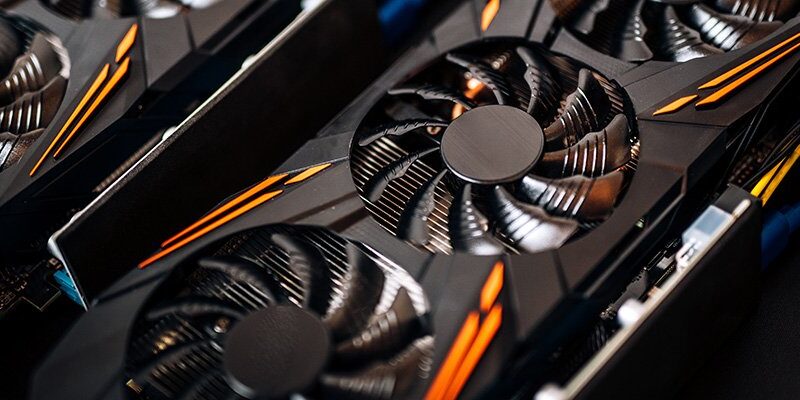

Last year, during his keynote presentation at the GTC conference in the US, Huang claimed that the lowest cost per token was being achieved using the most expensive GPU, which at the time was the Grace Blackwell NVLink 72.

Nvidia describes the GB200 Grace Blackwell as a “superchip”, which connects two high-performance Nvidia Blackwell Tensor Core GPUs and the Nvidia Grace CPU with the NVLink-Chip-to-Chip (C2C) interface, capable of delivering 900 GBytes/s of bidirectional bandwidth.

Significantly, the architecture means that applications have coherent access to a unified memory space. According to Nvidia, this simplifies programming and supports the larger memory needs of trillion-parameter LLMs, transformer models for multimodal tasks, models for large-scale simulations, and generative models for 3D data.

‘Huang’s Law’

Some industry observers have coined the term “Huang’s Law” to describe his perspective of how each new generation of GPU delivers a 10x increase in performance, compared with Moore’s Law’s doubling of performance every 18 months.
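The gap between the two rates is easier to see when both are compounded over the same period. The Python sketch below does this. The 24-month gap between GPU generations and the six-year window are illustrative assumptions, as the article does not say how often a new generation arrives; the function name is hypothetical.

```python
def growth_factor(multiplier: float, period_months: float, elapsed_months: float) -> float:
    """Performance multiple after `elapsed_months` if it grows by `multiplier` every `period_months`."""
    if multiplier <= 0 or period_months <= 0 or elapsed_months < 0:
        raise ValueError("multiplier and period must be positive, elapsed time non-negative")
    return multiplier ** (elapsed_months / period_months)


elapsed = 72  # Assumption: compare both rates over six years.

# Moore's Law as stated in the article: 2x every 18 months.
moore = growth_factor(2.0, 18.0, elapsed)

# "Huang's Law" as stated: 10x per GPU generation.
# Assumption: one generation every 24 months (not given in the article).
huang = growth_factor(10.0, 24.0, elapsed)

print(f"Moore's Law over {elapsed} months: about {moore:,.0f}x")
print(f"Huang's Law over {elapsed} months: about {huang:,.0f}x")
```

On these assumptions, six years of doubling every 18 months gives a 16x improvement, while three generations at 10x each give 1,000x. A shorter or longer gap between generations would change the second figure considerably.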

Nadella and Huang both spoke about how newer hardware is more energy-efficient at running AI workloads. During the Microsoft AI tour, Nadella noted that today’s system supports a completely different memory hierarchy, which he said means “there’s now no latency with AI inference”.

The messaging from both the Microsoft and Nvidia chiefs is that the best efficiency is achieved by taking advantage of the capabilities available in these new systems. “There’s an unbelievable renaissance happening with these systems and workloads, whether they’re training workloads or inference workloads, they’re unlike anything we’ve seen in the past,” said Nadella.

The tech sector is dead set on getting enterprises to adopt more and more AI. It is being built into knowledge-aware enterprise software, seemingly to draw on the capabilities available in the latest generation of AI acceleration hardware.

Clearly, the business models of Microsoft and Nvidia are tied to increased demand for AI. But it is also apparent that the cost of deploying advanced AI systems is not going to get any cheaper. If anything, capital expenditure on datacentres will continue to increase at a phenomenal rate, fuelled by demand for these new AI systems and the AI acceleration hardware they need.