Installing an AI assistant on your PC? 5 do’s and don’ts

Summary created by Smart Answers AI

In summary:

- PCWorld examines critical security practices for new personal AI assistants like Claude Cowork and Perplexity’s Personal Computer that offer extensive desktop control capabilities.

- These AI tools can manipulate files, execute commands, and access system directories, creating significant security risks if users grant improper permissions or share sensitive data.

- Key recommendations include designating limited workspace folders, using planning modes for task review, and keeping AI away from confidential documents like tax returns or bank details.

An AI that can rename your screenshots, organize your receipts, tidy up your notes, and build apps, all while you’re busy with other things or even sleeping?

Count me in.

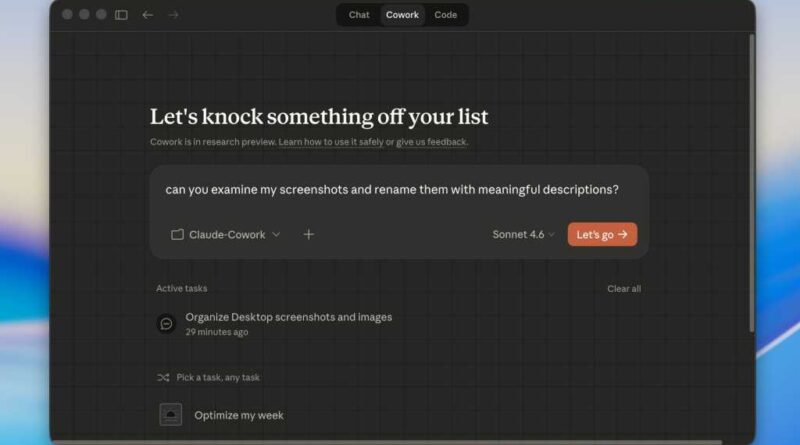

From Claude Cowork to Perplexity’s Personal Computer and Manus’ My Computer, there’s been a bumper crop of personal AI assistants that live on your PC and can take charge of your desktop. Besides the usual integrations with Gmail, Outlook, and Excel, these apps can actually manipulate and edit your files, or execute “shell” commands that give them unprecedented access to your system.

Unlike OpenClaw, the viral open-source AI tool that kicked off the whole personal AI agent craze, Claude Cowork and the newer desktop apps from Perplexity and Meta-owned Manus come from big commercial AI players, each with one-click installers (meaning no messing around with GitHub) and slick user interfaces.

All that spit and polish might make you think that these new personal AI assistants are perfectly safe to use. They’re not.

Just like OpenClaw, the new assistants (Claude Cowork, Perplexity’s Personal Computer, and Manus’ My Computer) are all capable of wreaking havoc on your system if you let them. Give them access to the wrong directory or let them fire off commands without the proper oversight, and you could have a mess on your hands.

Don’t get me wrong; Claude Cowork and its competitors are capable of some eye-popping productivity feats when used correctly, and I’ll be getting to their coolest tricks soon. But first, let’s cover some basic safety tips, starting with…

Don’t give your AI assistant access to a high-level directory

One of the first things Claude Cowork will ask you to do is designate a folder as its “workspace.” Once you choose a folder, your AI assistant will have full access to the files inside, including any subdirectories and the files within those subdirectories.

Now, when I say full access, I mean the AI can read the files, index them, and use them as context for answering questions. It can even rename, edit, or delete them.

Ben Patterson/Foundry

All that functionality can lead to some incredibly powerful workflows (renaming and organizing entire directories of screenshots is one of them), but with the wrong prompt, your AI could wipe swaths of files in an instant or get access to sensitive files that you want to keep hidden.

So, whatever you do, don’t give Claude Cowork, Perplexity’s Personal Computer, or another AI tool access to, say, your Documents directory. That’s just asking for trouble.

Instead, give it access to a smaller folder that’s further down the directory tree or, even better, grant it access to a fresh, unpopulated directory, and then add only the files and folders that you’re comfortable with it touching.
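If you’d rather script the “fresh, unpopulated directory” approach than do it by hand, here is a minimal Python sketch. The function name `stage_workspace` and the folder names are my own illustrations, not part of Claude Cowork or any other assistant; the idea is simply to create a brand-new folder and copy in (not move) only the files you’ve chosen.

```python
import shutil
from pathlib import Path


def stage_workspace(workspace: Path, files_to_share: list[Path]) -> list[Path]:
    """Create a new, empty workspace folder and copy in only the chosen files.

    Hypothetical helper for illustration; no AI assistant ships this function.
    """
    # exist_ok=False makes this fail if the folder already exists,
    # so we never hand over a directory that is already populated.
    workspace.mkdir(parents=True, exist_ok=False)

    copied = []
    for src in files_to_share:
        dest = workspace / src.name
        if dest.exists():
            raise FileExistsError(f"Two selected files share the name {src.name!r}")
        # Copy rather than move: the original stays outside the AI's reach.
        shutil.copy2(src, dest)
        copied.append(dest)
    return copied
```

You would then point the assistant at the new folder only. For example, calling `stage_workspace(Path.home() / "ai-workspace", [some_screenshot])` leaves the original screenshot untouched in its old location.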

Don’t let it access sensitive documents

It may be tempting to let your personal AI assistant have at it with your bank statements, tax returns, or other sensitive documents, but it’s a bad idea.

While some AI assistant tools like Claude Cowork won’t train their models on your data, your files could still be at risk from “prompt-injection” attacks, that is, files with hidden prompts that could trick Claude or another AI into uploading sensitive information to the attacker.

For that reason, you should think twice before adding anything with personal identifiers such as Social Security numbers, bank account numbers, or anything else you don’t want falling into the wrong hands.

A great tip I’ve picked up is this: Before granting your AI assistant access to a file, ask yourself whether you’d be comfortable putting the file into a chat app. If the answer’s no, then keep that file out of your AI’s workspace.
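For text files, you can back up that gut check with a rough automated pass. The Python sketch below flags files that appear to contain a U.S. Social Security number or a long run of digits that could be an account number. The patterns and the names `find_sensitive` and `flag_files` are my own assumptions for illustration; they will miss plenty of formats and can raise false alarms, so treat a clean result as a hint, not a guarantee.

```python
import re
from pathlib import Path

# Illustrative patterns only; real identifiers come in many more formats.
SENSITIVE_PATTERNS = {
    "possible SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "possible account number": re.compile(r"\b\d{10,17}\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the labels of every pattern that appears in the text."""
    return [label for label, pattern in SENSITIVE_PATTERNS.items()
            if pattern.search(text)]


def flag_files(folder: Path) -> dict[Path, list[str]]:
    """Scan every file under a folder and report the ones that look risky."""
    flagged = {}
    for path in sorted(folder.rglob("*")):
        if path.is_file():
            # errors="ignore" lets us skim binary files without crashing;
            # it does not make this a reliable scanner for PDFs or images.
            hits = find_sensitive(path.read_text(errors="ignore"))
            if hits:
                flagged[path] = hits
    return flagged
```

Run `flag_files` on a folder before you designate it as a workspace, and move anything it reports somewhere the assistant can’t reach.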

Do put your AI assistant on a tight leash

The same goes for AI coding agents. Most personal AI assistants will ask you what level of oversight you’d like over their actions.

On the more cautious end of the spectrum, you may be able to approve every command before your AI executes it; on the other end, you can throw caution to the wind, allowing your assistant to carry out its commands autonomously while you sleep.

Maintaining approval over your AI assistant’s every move is, of course, the safest option, but it’s also the most tedious, and you may quickly grow annoyed by having to click “approve” for every action. Still, giving your AI free rein over its actions could be a recipe for disaster if you give it wrong or vague instructions.

The key is finding a reasonable middle ground, one that allows the AI to act like a true autonomous assistant without simply letting it loose. For example, you can allow Cowork or another AI assistant to perform certain commands, such as read-only ones, on its own (“Always Allow”), while keeping potentially destructive commands on a must-approve (“Allow Once”) basis.
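To make the middle-ground idea concrete, here is a toy Python sketch of such a policy. It is not how Cowork or any other assistant actually decides; the command list, the `approval_mode` function, and the `"always_allow"` and `"ask_each_time"` labels are all hypothetical. The logic is deliberately conservative: only a short list of read-only commands runs unattended, and anything containing shell operators (which could redirect output over a file or chain in a second command) goes back to you.

```python
import shlex

# Hypothetical allowlist: commands that only read data when used on their own.
READ_ONLY_COMMANDS = {"ls", "cat", "head", "tail", "wc", "grep"}

# Characters that can turn a harmless command into a destructive one,
# e.g. "cat a.txt > b.txt" overwrites b.txt.
SHELL_METACHARS = set(";|&><`$")


def approval_mode(command: str) -> str:
    """Return "always_allow" for plainly read-only commands, else "ask_each_time"."""
    if any(ch in SHELL_METACHARS for ch in command):
        return "ask_each_time"
    try:
        tokens = shlex.split(command)
    except ValueError:
        # Unbalanced quotes or similar: when in doubt, ask the human.
        return "ask_each_time"
    if tokens and tokens[0] in READ_ONLY_COMMANDS:
        return "always_allow"
    return "ask_each_time"
```

With this policy, `approval_mode("ls -la")` is auto-approved, while `approval_mode("rm -rf notes")` and `approval_mode("cat a.txt > b.txt")` both require a click. The default for anything unrecognized is to ask, which is the safer direction to err in.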

Do ask for a plan

A new and welcome trend in AI coding tools is “planning” modes, where the agent maps out in detail what it’s going to do before it does it. The same thing is possible with personal AI assistants.

Instead of ordering them to rename all the files in your workspace and then crossing your fingers, give them a prompt like, “Devise a plan for renaming all the screenshots in my work directory; don’t implement the plan yet, but stay in pencil-and-paper mode,” then let the AI detail how it will proceed.

Look over the plan carefully and make any needed changes before giving your approval.
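The same plan-first, act-later pattern is easy to see in code. In this Python sketch (my own illustration, with hypothetical function names and a made-up naming scheme), `plan_renames` only computes the proposed old-name-to-new-name pairs and touches nothing on disk; a separate `apply_plan` step carries them out once you’ve looked the list over.

```python
from pathlib import Path


def plan_renames(folder: Path, prefix: str = "screenshot") -> list[tuple[Path, Path]]:
    """Propose new names for every file in a folder without changing anything."""
    files = sorted(p for p in folder.iterdir() if p.is_file())
    return [
        (old, old.with_name(f"{prefix}-{i:03d}{old.suffix}"))
        for i, old in enumerate(files, start=1)
    ]


def apply_plan(plan: list[tuple[Path, Path]]) -> None:
    """Carry out a reviewed plan, refusing to overwrite existing files."""
    for old, new in plan:
        if old == new:
            continue  # already has the target name
        if new.exists():
            raise FileExistsError(f"{new} already exists; plan needs revising")
        old.rename(new)
```

Printing each pair from `plan_renames` gives you the “pencil-and-paper” view; nothing is renamed until you call `apply_plan` yourself.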

Do back up your data

Even within a sandboxed workspace, it’s possible for Claude Cowork and other personal AI assistants to corrupt or delete your files accidentally, and while the metadata of your files may be preserved, the actual data may not be.

For that reason, it’s essential that you back up any mission-critical files before allowing your AI to manipulate them. If that isn’t feasible, then perhaps you should keep your can’t-lose data out of your AI’s workspace.
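Any backup method you trust will do, but if you want a quick snapshot before each session, a few lines of Python are enough. This is a minimal sketch under my own assumptions: the `back_up` function and the timestamped folder naming are illustrative, and the one rule it enforces is that the backup must live outside the workspace, since a copy the AI can reach isn’t much of a safety net.

```python
import shutil
from datetime import datetime
from pathlib import Path


def back_up(workspace: Path, backup_root: Path) -> Path:
    """Copy the whole workspace into a timestamped folder under backup_root."""
    # A backup inside the workspace could be edited or deleted by the assistant.
    if backup_root.resolve().is_relative_to(workspace.resolve()):
        raise ValueError("Keep backups outside the AI's workspace")

    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    dest = backup_root / f"{workspace.name}-{stamp}"
    # copytree creates dest (and any missing parents) and copies everything.
    shutil.copytree(workspace, dest)
    return dest
```

The function returns the path of the snapshot, so you know exactly where to restore from if a session goes sideways. Note that `Path.is_relative_to` requires Python 3.9 or later.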