Why Microsoft should be worried about M5 MacBooks

Summary created by Smart Answers AI

In summary:

- PCWorld highlights how Apple’s new M5 Pro and M5 Max chips in MacBook Pros pose a significant threat to Microsoft’s AI ambitions with their superior local AI processing capabilities.

- The M5 Max offers up to 128GB of unified memory, far exceeding competitors like the Nvidia RTX 5090’s 32GB, enabling large AI models to run locally with enhanced privacy and reduced latency.

- Apple’s neural accelerators integrated within its GPU cores, together with the MLX framework, create a more efficient AI platform than Microsoft’s Windows ML approach.

Apple’s Mac Minis have become the darlings of local AI. Apple’s latest MacBook Pros, with the new Apple M5 Pro and Apple M5 Max chips, could entice developers even further.

Apple debuted its new MacBook Pros this week, combining a pair of 3-nm CPU dies with an interconnect between the two. Macworld’s Jason Cross noted that the dual-die design isn’t new; AMD processors like the Ryzen 9 5950X or the 7950X3D use a pair of chiplets linked by AMD’s Infinity Fabric interconnect.

What’s new about Apple’s M5 chips is the inclusion of “super cores” and “performance cores.” Some of that (the renamed “super cores”) is branding, as Jason points out. Other bits, like the new performance cores, remain somewhat unknown. But what’s really interesting is that they’re paired with GPUs of between 20 and 40 cores, each core with a neural accelerator inside, as well as up to a whopping 128GB of configurable unified memory. That’s something your Windows PC doesn’t do, and it brings Apple customers several advantages.

Right now, we don’t know many of the details about Apple’s latest chips that a deep dive would reveal, perhaps at the Hot Chips conference this August. But the high-level features alone tell us enough to marvel at what Apple is doing.

Memory matters, and the M5 Max MacBooks deliver in spades

Let’s talk about the most impressive aspect right away: the memory. Apple’s M5 Max chip includes 48GB of unified memory as standard, configurable up to a whopping 128GB. Apple’s configuration page is a little confusing, but it appears that all of the 16-inch MacBook Pro laptops can be configured with that capacity, though at a hefty $4,399. Crucially, that’s unified memory, which the CPU and GPU share.

For years, Windows laptops powered by AMD and Intel chips have reserved dedicated VRAM out of system memory: half of the available system RAM in an Intel laptop, and a fixed amount in a Ryzen notebook as well. When AMD announced the Ryzen AI Max processor for local AI, it tweaked its Adrenalin software to let users adjust the VRAM on the fly. Last August, Intel debuted “Shared GPU Memory Override,” which basically does the same. Qualcomm, whose Arm processors are the closest to the M5 Pro and Max from an architectural standpoint, doesn’t offer that capability.

Apple’s MacBooks can be used for more than AI, of course, such as ray tracing. (Image: Apple)

Apple’s M5 chips work with MLX, Apple’s open-source array framework, which appears to take local AI to another level. MLX, as Apple explains, doesn’t require the user to decide how much memory to allocate. It doesn’t necessarily even make that choice itself. Instead, MLX, which has built-in support for neural network training and inference, including text and image generation, can “run on either the CPU or the GPU without needing to move memory around.” To use an old cliché, it just works.

AI models gobble up the fastest memory available in your PC, which is usually the video memory attached to your GPU. The best AI models are often the most complex: bigger is better, and the number of parameters is used as a general metric of how capable a model is. But these models also need a lot of memory to run in, just as Windows won’t run on a PC with a measly 2GB of RAM, and Adobe Photoshop requires considerably more.

So, as a subtotal: Apple’s new M5 MacBook Pros include up to 128GB of memory, the vast majority of which the GPU (in this case, the AI engine) can use. That’s far more than the local VRAM on the most powerful PC graphics card, the Nvidia RTX 5090, which has 32GB. And if you think $4,399 is way too much to pay for an AI box, the $3,099 MacBook Pro includes 48GB of unified memory as standard. You get the idea.
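The memory argument comes down to simple arithmetic. As a rough rule of thumb (an assumption here, not Apple’s figures), a model’s weights take about its parameter count times the bytes stored per parameter, so a model with N billion parameters needs roughly N × bits ÷ 8 gigabytes. The sketch applies that to a hypothetical 70-billion-parameter model at 16, 8, and 4 bits and checks it against the 32GB and 128GB figures quoted above. It ignores the KV cache, activations, and the share of unified memory macOS keeps for itself, so real requirements are higher.

```python
# Back-of-the-envelope memory math for running an LLM locally.
# Assumption: weights-only footprint = parameters x bytes per parameter.
# KV cache, activations, and OS/runtime overhead are ignored, so treat the
# results as lower bounds.

def weights_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate size of the weights in (decimal) GB.

    1 billion parameters x 1 byte each = 1 GB, so GB = billions x bits / 8.
    """
    return params_billions * bits_per_param / 8


def fits(params_billions: float, bits_per_param: int, memory_gb: float) -> bool:
    """True if the weights alone fit within the given memory budget."""
    return weights_gb(params_billions, bits_per_param) <= memory_gb


# Capacities mentioned in the article.
BUDGETS_GB = {"RTX 5090 (32GB VRAM)": 32, "M5 Max (up to 128GB unified)": 128}

if __name__ == "__main__":
    for bits in (16, 8, 4):
        size = weights_gb(70, bits)
        for name, budget in BUDGETS_GB.items():
            print(f"70B model at {bits}-bit: ~{size:.0f}GB, fits {name}: {fits(70, bits, budget)}")
```

Even at 4 bits, the 70B model’s weights (about 35GB) overflow a 32GB card, while the 8-bit and 4-bit versions fit within 128GB of unified memory.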

Apple’s MacBooks, then, should be able to both load and run local AI models that would normally be forced to run in the cloud. That means no network latency, no subscription costs, and no data leaving the machine, which matters for applications governed by strict privacy laws. Apple’s own estimates (below) show the memory requirements of various popular models, all of which should easily run on the MacBook. Double the memory allocation of the Qwen3 model, and a 70-billion-parameter model should be possible. Even cooler, Apple published a few lines of code to quantize, or compress, a model down to a lower precision. That’s slick.
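Quantization is what makes those larger models practical. The snippet below is not the code Apple published; it is a minimal, stdlib-only sketch of one common scheme, group-wise affine quantization, in which each small group of weights is stored as low-bit integer codes plus a per-group scale and offset. The `quantize`/`dequantize` names and the tiny `group_size` are illustrative choices; MLX ships its own quantization routines that operate on real tensors rather than Python lists.

```python
# Minimal sketch of group-wise affine quantization (illustrative, not Apple's code).
# Each group of weights is mapped onto 2**bits evenly spaced levels between the
# group's min and max, so only small integer codes plus one scale and one
# offset per group need to be stored.

def quantize(weights, bits=4, group_size=4):
    levels = (1 << bits) - 1  # 4 bits -> integer codes 0..15
    codes, scales, offsets = [], [], []
    for start in range(0, len(weights), group_size):
        group = weights[start:start + group_size]
        lo, hi = min(group), max(group)
        # A constant group would give a zero scale; any nonzero scale works there.
        scale = (hi - lo) / levels if hi > lo else 1.0
        codes.append([round((w - lo) / scale) for w in group])
        scales.append(scale)
        offsets.append(lo)
    return codes, scales, offsets


def dequantize(codes, scales, offsets):
    """Reconstruct approximate weights from codes, scales, and offsets."""
    restored = []
    for group, scale, lo in zip(codes, scales, offsets):
        restored.extend(lo + c * scale for c in group)
    return restored


if __name__ == "__main__":
    weights = [0.12, -0.50, 0.33, 0.91, 2.00, -1.00, 0.05, 1.50]
    codes, scales, offsets = quantize(weights)
    restored = dequantize(codes, scales, offsets)
    for original, approx in zip(weights, restored):
        print(f"{original:+.3f} -> {approx:+.3f}")
```

Each reconstructed value lands within half a quantization step of the original. Real implementations use much larger groups than four so the per-group metadata stays small, which is how 4-bit weights end up at roughly a quarter of the size of 16-bit ones, at some cost in precision.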

Apple’s design also has some impressive performance characteristics. Processors from AMD, Intel, and Qualcomm each pair an integrated GPU with a dedicated NPU. Apple takes a different approach: it puts an NPU (or at least a “neural accelerator”) inside each of its GPU cores. We know that these neural accelerators perform dedicated matrix-multiplication operations essential to machine learning, and that Apple also has a separate 16-core Neural Engine, which is presumably its name for a dedicated NPU. We don’t really know how it all interacts.
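To see why matrix-multiplication hardware matters so much, consider a single dense (fully connected) layer, a basic building block of an LLM: its output is a weight matrix multiplied by an input vector, plus a bias. The generic sketch below shows that operation and counts the multiply-accumulate operations it takes. It illustrates the workload in general and says nothing about how Apple’s neural accelerators are built, which Apple hasn’t detailed; the 4096 width is an arbitrary example size.

```python
# A dense layer computes y = W·x + b: one dot product per output feature.
# This generic workload is what matrix-multiplication units accelerate.

def dense(weights, bias, x):
    """weights: out_features rows of in_features entries; x: in_features values."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def multiply_accumulates(in_features, out_features, tokens=1):
    """Multiply-accumulate operations for one dense layer applied to `tokens` inputs."""
    return in_features * out_features * tokens


if __name__ == "__main__":
    W = [[1, 2], [3, 4], [5, 6]]  # 3 outputs x 2 inputs
    b = [0, 1, -1]
    print(dense(W, b, [1, 1]))  # [3, 8, 10]
    # A single 4096x4096 layer, for a single token, already needs ~16.8 million multiply-adds.
    print(multiply_accumulates(4096, 4096))  # 16777216
```

Processing many tokens at once stacks the input vectors into a matrix, turning each layer into a full matrix-matrix multiply, which is exactly the kind of operation dedicated accelerators are designed to speed up.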

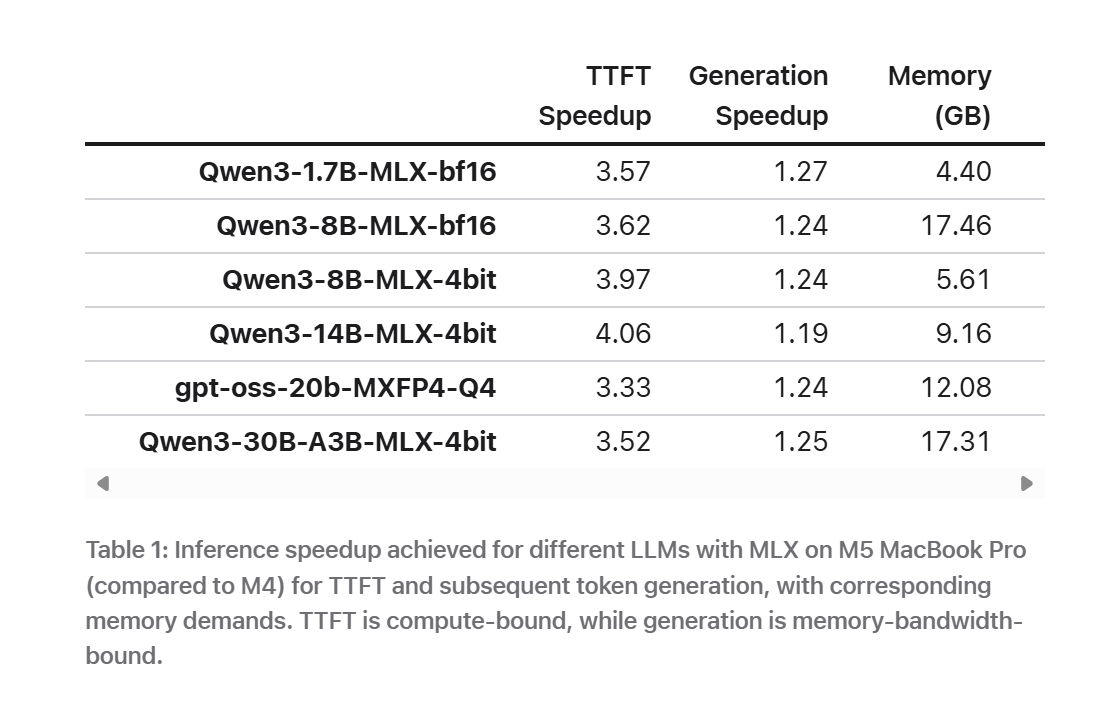

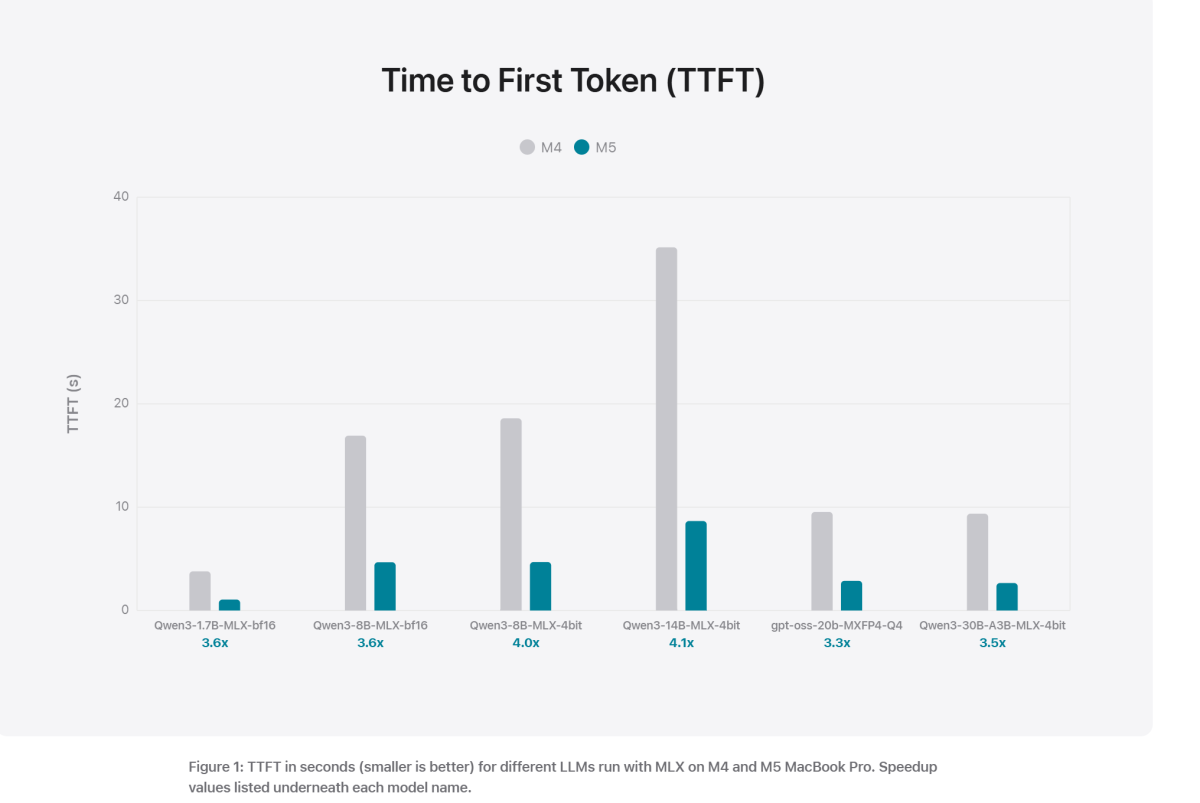

Still, Apple says that the “time to first token” (how quickly the LLM starts responding to your input) is dramatically faster, both in the table above and in the chart below.
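Time to first token is easy to measure yourself on any model that streams its output: start a timer when the prompt goes in and stop it when the first token comes back. The sketch below does that for any Python iterator of tokens. `fake_llm` is a hypothetical stand-in with made-up delays, not a real model or Apple’s benchmark method; swap in whatever streaming interface your local runtime provides.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_stream(tokens: Iterable[str]) -> Tuple[float, float, int]:
    """Return (time_to_first_token_s, total_time_s, token_count) for a token stream."""
    start = time.perf_counter()
    first_token_at = None
    count = 0
    for _ in tokens:
        if first_token_at is None:
            first_token_at = time.perf_counter() - start  # prompt processing ends here
        count += 1
    total = time.perf_counter() - start
    # An empty stream never produced a first token; report the total wait instead.
    return (first_token_at if first_token_at is not None else total), total, count


def fake_llm(prompt_delay: float = 0.05, per_token: float = 0.01, n: int = 5) -> Iterator[str]:
    """Hypothetical model: 'reads' the prompt, then emits n tokens one by one."""
    time.sleep(prompt_delay)
    for i in range(n):
        yield f"token{i}"
        time.sleep(per_token)


if __name__ == "__main__":
    ttft, total, n = measure_stream(fake_llm())
    print(f"time to first token: {ttft:.3f}s, {n} tokens in {total:.3f}s")
```

Because the generator body only starts running on the first iteration, the simulated prompt delay falls inside the timed window, just as prompt processing would with a real model.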

Personally, my LLM use hasn’t really been limited by how fast the LLM is. I like a quick response, but the dot-matrix-printer-like way in which an LLM generates text can make it difficult to read. The sophistication of the response matters more.

Can the Windows world keep up?

To be fair, Microsoft has something similar in concept moving through its pipeline: Windows ML, which takes advantage of the most powerful silicon available in the PC to run local AI applications. It essentially says that you don’t need an NPU, just whatever the most powerful available component in your PC happens to be. Which approach is better? I honestly don’t know, though proper testing should reveal the answer.
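As a toy illustration of the idea (and only the idea), picking “whatever is the most powerful available component” reduces to choosing the best-scoring device that’s present, with an NPU as just one candidate among several. The `Device` class, the `pick_device` function, and the throughput scores are all hypothetical; this is not Windows ML’s API or its actual selection logic.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Device:
    name: str
    kind: str        # "cpu", "gpu", or "npu"
    score: float     # hypothetical relative throughput; higher is faster
    available: bool = True


def pick_device(devices: List[Device], prefer: Optional[str] = None) -> Device:
    """Choose the fastest available device, honoring an optional preferred kind."""
    usable = [d for d in devices if d.available]
    if not usable:
        raise RuntimeError("no compute device available")
    preferred = [d for d in usable if d.kind == prefer]
    # Fall back to every usable device when the preferred kind isn't present.
    return max(preferred or usable, key=lambda d: d.score)


if __name__ == "__main__":
    # Made-up scores for illustration only.
    machine = [Device("cpu0", "cpu", 1.0), Device("gpu0", "gpu", 8.0), Device("npu0", "npu", 4.0)]
    print(pick_device(machine).name)                # gpu0: no NPU required
    print(pick_device(machine, prefer="npu").name)  # npu0
```

The fallback means a machine without an NPU still runs the workload on its best remaining component, which is the gist of the “you don’t need an NPU” pitch.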

AMD’s Ryzen AI Max+ is an AI powerhouse, and probably Apple’s closest challenger. AMD throws 80MB of cache at the AI problem with the Ryzen AI Max+ 395, and adding cache to its processors is a strategy it has used to excellent effect.

Still, I’ve seen anecdotal reports that Apple Store employees have been surprised by how quickly Mac Minis are moving out the door. The small, compact boxes have proven quite useful to developers looking to run LLMs or agentic AI locally, without chewing through either tons of power or an AI token subscription. Apple’s new MacBook Pros simply add a screen.

As far as I can tell, however, the Mini wasn’t designed with AI in mind. With Apple’s management now aware that the Mini is a favored AI machine, it will be interesting to see what happens with the expected 2026 Mac Mini with an M5 chip inside. It all certainly sets a high bar for AMD, Intel, and Qualcomm to meet.